Personal Development Series

There is a growing phenomenon that is difficult to detect at first, largely because it feels so productive. People are writing faster, speaking more clearly, and presenting ideas with a level of polish that would have required years of practice not long ago. The friction that once slowed output has been dramatically reduced, and with it, a new form of confidence has quietly taken hold.

It does not come from mastery in the traditional sense. It comes from assistance.

A professional can now generate a strategic memo in minutes, refine language with precision, and present complex ideas with a clarity that rivals that of seasoned experts. The output is impressive, often indistinguishable from work produced through deep experience.

And yet, beneath that output, there is a growing question. Does the individual truly possess the competence that the output suggests?

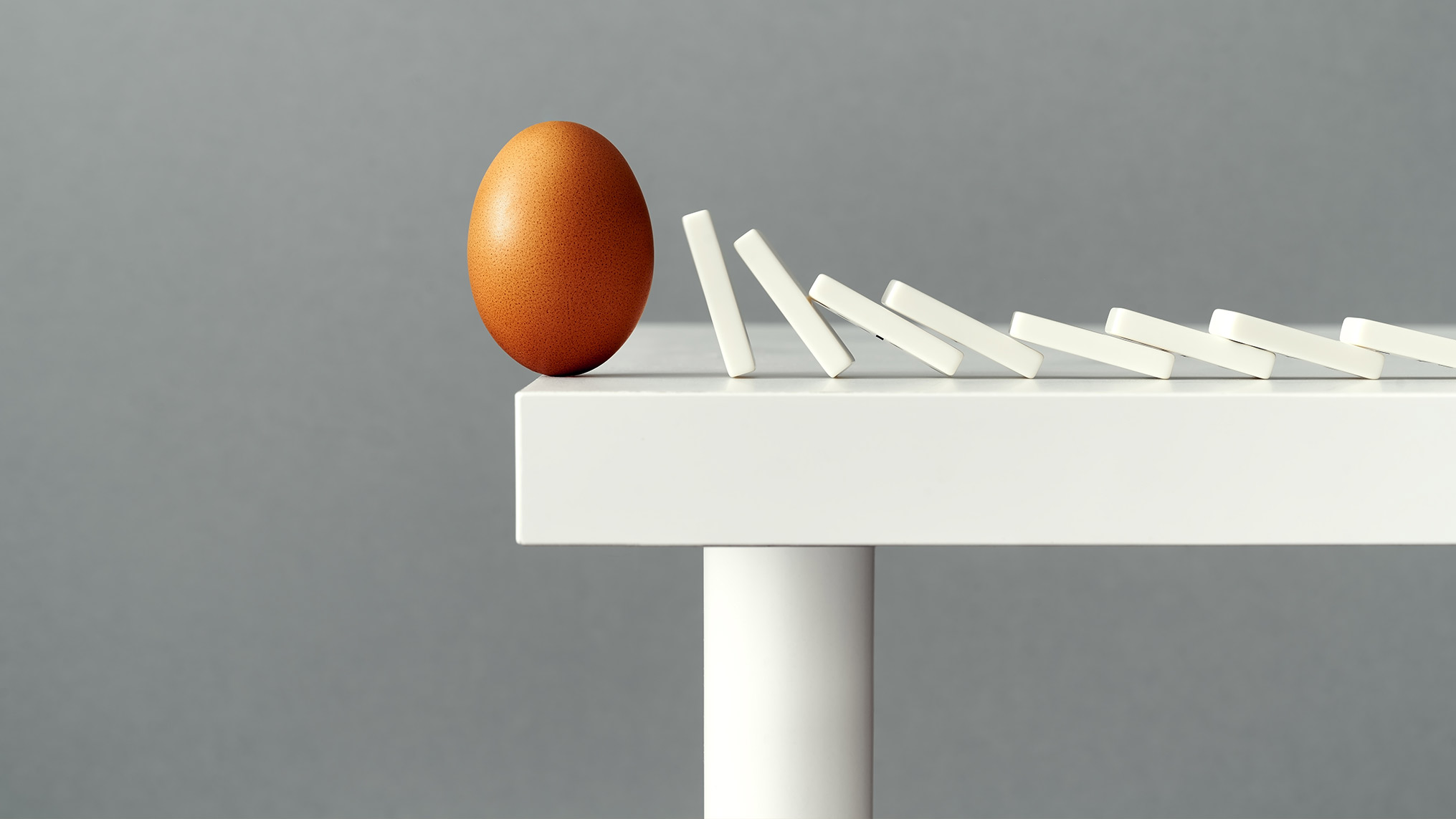

This is the paradox of the moment. AI is not just amplifying what we can do. It is reshaping how we perceive what we are capable of doing. In doing so, it introduces a subtle but significant risk: the illusion of competence.

The Historical Link Between Effort and Ability

For most of human history, confidence was built through effort. Skills were developed over time, through repetition, failure, and gradual improvement. The process was often slow and, at times, frustrating, but it provided a reliable signal. The more effort required to produce something, the more likely it was that the individual had internalized the underlying capability.

This relationship between effort and ability was not perfect, but it was directionally accurate. It created a form of feedback that helped individuals calibrate their own competence. When something was difficult, it revealed the limits of understanding. When it became easier, it reflected genuine progress.

AI disrupts this relationship.

By reducing the effort required to produce high-quality outputs, it weakens the connection between what is created and what is actually understood. The external signal remains strong (the output looks polished, structured, and insightful), but the internal signal becomes less clear.

The individual may feel more capable, even when their underlying skill has not meaningfully changed.

Fluency Without Foundation

One of the defining characteristics of AI-assisted work is fluency. Language models produce responses that are coherent, articulate, and well-organized. They can structure arguments, draw connections, and present ideas in a way that feels intellectually rigorous.

When individuals engage with this fluency, they often adopt it. They refine it, personalize it, and present it as their own thinking. Over time, this creates a sense of familiarity with concepts that may not have been deeply processed.

This is where the illusion begins to form.

Fluency is often mistaken for understanding. The ability to articulate an idea clearly can create the impression that the idea has been fully internalized. In reality, articulation and comprehension are related but distinct.

True understanding involves the ability to apply knowledge in unfamiliar contexts, to adapt it under pressure, and to recognize its limitations. These capabilities are developed through experience, not just exposure.

When AI provides the articulation without requiring the underlying cognitive work, it becomes possible to sound competent without being fully competent.

The Psychology of Overconfidence

This dynamic aligns with well-documented psychological patterns. The Dunning-Kruger effect, for example, describes how individuals with limited knowledge in a domain often overestimate their competence, while those with deeper expertise tend to be more aware of their limitations.

AI has the potential to amplify this effect.

By providing high-quality outputs regardless of the user's level of understanding, it can elevate the perceived competence of individuals who may not yet have the depth to match it. The feedback that typically corrects overconfidence (struggle, failure, and the need to revise one's thinking) arrives less often.

Instead, individuals receive consistent reinforcement. Their outputs are effective, their ideas are well-received, and their confidence grows accordingly.

The problem is not confidence itself. Confidence is essential for action and leadership. The problem is when confidence becomes detached from capability.

At that point, it is no longer a signal. It is a distortion.

When the Test Comes

The true measure of competence is rarely the initial output. It is the ability to perform under conditions where assistance is limited or absent.

This is where the gap between perceived and actual competence becomes visible.

In situations that require real-time decision-making, nuanced judgment, or the ability to navigate unexpected challenges, individuals cannot rely on pre-constructed responses. They must draw on their own understanding.

If that understanding has not been developed, the limitations become apparent.

This is particularly relevant in leadership and high-stakes environments. Decisions often need to be made quickly, with incomplete information, and with consequences that extend beyond the immediate context. The ability to think independently, to question assumptions, and to adapt in real time becomes critical.

AI can support these processes, but it cannot replace the need for internal capability.

When individuals have become accustomed to relying on external assistance, the transition to independent thinking can feel abrupt and uncomfortable.

The Subtle Erosion of Learning

Perhaps the most concerning aspect of this shift is not the immediate illusion of competence, but the long-term impact on learning.

Learning is inherently inefficient. It involves trial and error, repeated exposure, and the gradual building of mental models. It requires time, attention, and a willingness to engage with difficulty.

AI offers a more efficient alternative. It provides answers quickly, structures thinking, and reduces the need for prolonged struggle.

While this efficiency is valuable, it can also reduce the depth of engagement. When answers are readily available, the incentive to explore a problem fully diminishes. The individual may arrive at a solution without fully understanding how or why it works.

Over time, this can lead to a shallower form of knowledge. Individuals become proficient at navigating tools and producing outputs, but less practiced in developing deep, transferable understanding.

The risk is not that learning stops. It is that it becomes more surface-level.

Competence as a Lived Experience

True competence is not just about what can be produced. It is about what can be carried.

It is the ability to operate without assistance, to adapt knowledge to new situations, and to make decisions when the path forward is unclear. It is built through experience, through moments of uncertainty, and through the process of working through problems without immediate resolution.

This is why effort has historically been such an important component of development. It forces engagement. It creates conditions where understanding must be constructed, not simply accessed.

AI changes the environment in which this construction takes place.

The challenge is not to reject the tool, but to remain aware of what it is and is not providing. It can accelerate output, enhance clarity, and expand access to information. It cannot replace the internalization that comes from doing the work.

Competence, in this sense, remains a lived experience.

Recalibrating Confidence in an AI World

If the illusion of competence is a real risk, then the question becomes how to recalibrate confidence in a way that reflects true capability.

One approach is to reintroduce friction intentionally. This might involve periods of working without assistance, engaging deeply with problems before seeking external input, or testing understanding in environments where immediate support is not available.

Another approach is to focus on application rather than articulation. Instead of evaluating competence based on how well ideas are expressed, greater emphasis can be placed on how effectively they are applied in real-world contexts.

Feedback also becomes more important. Honest, critical evaluation from others can help counterbalance the reinforcing effects of AI-assisted output. It provides an external perspective on whether the perceived competence aligns with actual performance.

Ultimately, recalibration requires a shift in mindset. Confidence should be viewed not as a byproduct of output but as a reflection of capability.

The Cost of Easy Competence

We are entering an era where it is easier than ever to produce work that looks competent. This is, in many ways, a remarkable advancement. It lowers barriers, accelerates progress, and enables individuals to operate at a higher level.

But it also introduces a subtle cost.

When competence can be simulated, it becomes harder to distinguish from the real thing. When confidence is reinforced by polished outputs, it becomes less reliable as a signal of ability.

The challenge is not to abandon these tools, but to use them with awareness. To recognize that the ease they provide can obscure the work that still needs to be done.

Because in the end, the moments that matter most are not the ones where we have time to refine and edit. They are the ones where we must think, decide, and act in real time.

And in those moments, confidence that has not been earned has a way of revealing itself.
